The AI Agent node is the central component for creating autonomous conversations powered by artificial intelligence in Brain Studio. It allows you to configure an agent that processes user messages, queries knowledge bases, executes tools, and generates contextual responses autonomously. The configuration panel is organized into four tabs:

- General — Model and instructions

- Tools — Native, custom, and MCP tools

- Context — Initial message, conversation history, and external knowledge

- Advanced — Save response, fallback model, security, expiration, DLP, and more

General

The General tab contains the essential agent configuration.

Model

Select the language model (LLM) the agent will use to generate responses. Consider latency, cost, and task complexity before making your selection. Available models include:

OpenAI

- GPT 4.1 Mini: a simplified variant of GPT 4.1 optimized for fast responses with lower resource demands.

- GPT 4.1: a refined evolution of GPT-4, with better understanding, reasoning, and accuracy.

- GPT 4-o (Azure): a GPT 4-o version hosted on Azure, focused on stability and performance in enterprise environments. Supports vision.

- GPT 4-o Mini: a faster, lighter version of GPT 4-o, oriented toward speed-first use cases. Supports vision.

- GPT 5.2: OpenAI’s latest-generation model with advanced reasoning, larger context, and high precision on complex tasks.

Anthropic

- Claude 3.5 Sonnet: excellent for complex tasks requiring more elaborate text and extensive contexts.

- Claude 4 Sonnet: high reasoning and analysis capability.

- Claude 4.6 Sonnet: Claude’s latest generation, with improved reasoning and greater accuracy.

Google

- Gemini 2.5 Flash: fast processing with multimodal capabilities. Supports vision.

- Gemini 2.5 Pro: advanced reasoning with multimodal capabilities. Supports vision.

- Gemini 3 Flash: latest generation of Gemini with optimized multimodal processing. Supports vision.

Meta

- Llama 4 Scout: agile, low-latency model, ideal for quick ideas and lightweight interactions.

- Llama 4 Maverick: high-performance model designed for demanding reasoning and multi-step problem solving.

You can also add custom models using the “Add model” button. This allows you to connect your own or third-party models that are not in the predefined list.

Instructions

Define the base behavior of the agent through a system prompt. The instructions determine what role it adopts, what tone it uses, and what steps it follows before responding. Make sure they are concise, concrete, and free of ambiguity. Instructions support variable interpolation using the {{$variable}} syntax, which allows you to dynamically customize behavior based on the conversation context.

The field includes a character counter that adjusts based on the selected model, since each model has a different maximum limit.
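As a rough illustration of how {{$variable}} placeholders behave, the sketch below resolves them against a context dictionary. The `interpolate` helper, the dotted-path lookup, and the choice to leave unknown placeholders untouched are assumptions made for this example; Brain Studio performs the real substitution internally and may behave differently.

```python
import re

# Matches placeholders such as {{$context.email}} or {{$name}}.
PLACEHOLDER = re.compile(r"\{\{\s*\$([A-Za-z_][\w.]*)\s*\}\}")


def interpolate(template: str, variables: dict) -> str:
    """Replace {{$path}} placeholders with values looked up in `variables`."""

    def resolve(match: re.Match) -> str:
        value = variables
        for key in match.group(1).split("."):
            if not isinstance(value, dict) or key not in value:
                return match.group(0)  # Assumption: unknown variables stay as-is.
            value = value[key]
        return str(value)

    return PLACEHOLDER.sub(resolve, template)


# Hypothetical instructions and context values, for illustration only.
instructions = "You are a support agent for {{$context.company}}. Greet {{$context.name}} by name."
print(interpolate(instructions, {"context": {"company": "Acme", "name": "Ana"}}))
```

Previewing the resolved text this way can help you check that the final instructions still read clearly once real values are substituted.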

See prompting recommendations and examples

Tools

The Tools tab allows you to add tools that extend the agent’s capabilities beyond text generation. The agent autonomously decides when to invoke each tool based on the conversation context. To add tools, click the “+ Add tools” button and select the tools you need.

Native tools

These are predefined tools built into the platform. They are marked with the text “(Native)” in the selector:

| Tool | Description |
|---|---|
| Product search | Queries the product catalog configured in the bot |
| Send interactive message | Renders buttons, lists, and quick replies to the user |
| Send Call to Action | Sends CTA buttons with URL, with WebView support |
| Current date and time | Gets the current date and time for a specific timezone |
| Day of the week | Calculates the day corresponding to a date |
| Transfer to agent | Transfers the conversation to a human agent or support queue |

Custom tools

Tools created by the user from Brain Studio. When adding a custom tool you can configure:

- Name and description that the model uses to decide when to invoke it.

- Custom input parameters.

- Action on execution: behavior after the tool has been executed.

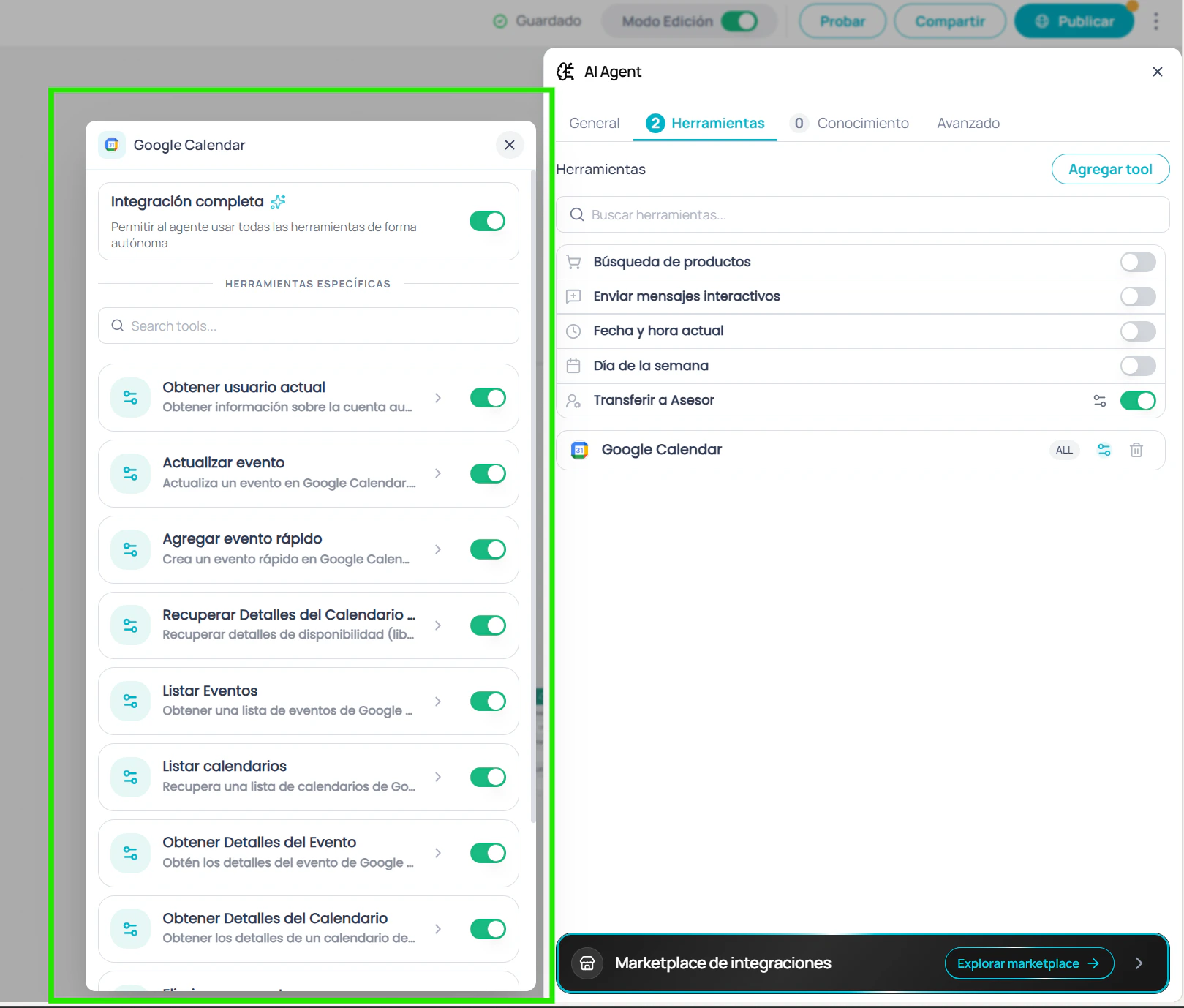

MCP Tools (Model Context Protocol)

Integrate external services using the MCP standard. There are two types of integration:

Native MCP apps

Applications from the Jelou marketplace that are installed directly on the platform. Each MCP app can be configured in two modes:

- Integration mode: enables all of the app’s tools at once.

- Granular mode: allows you to select individual tools from the app and configure each one separately.

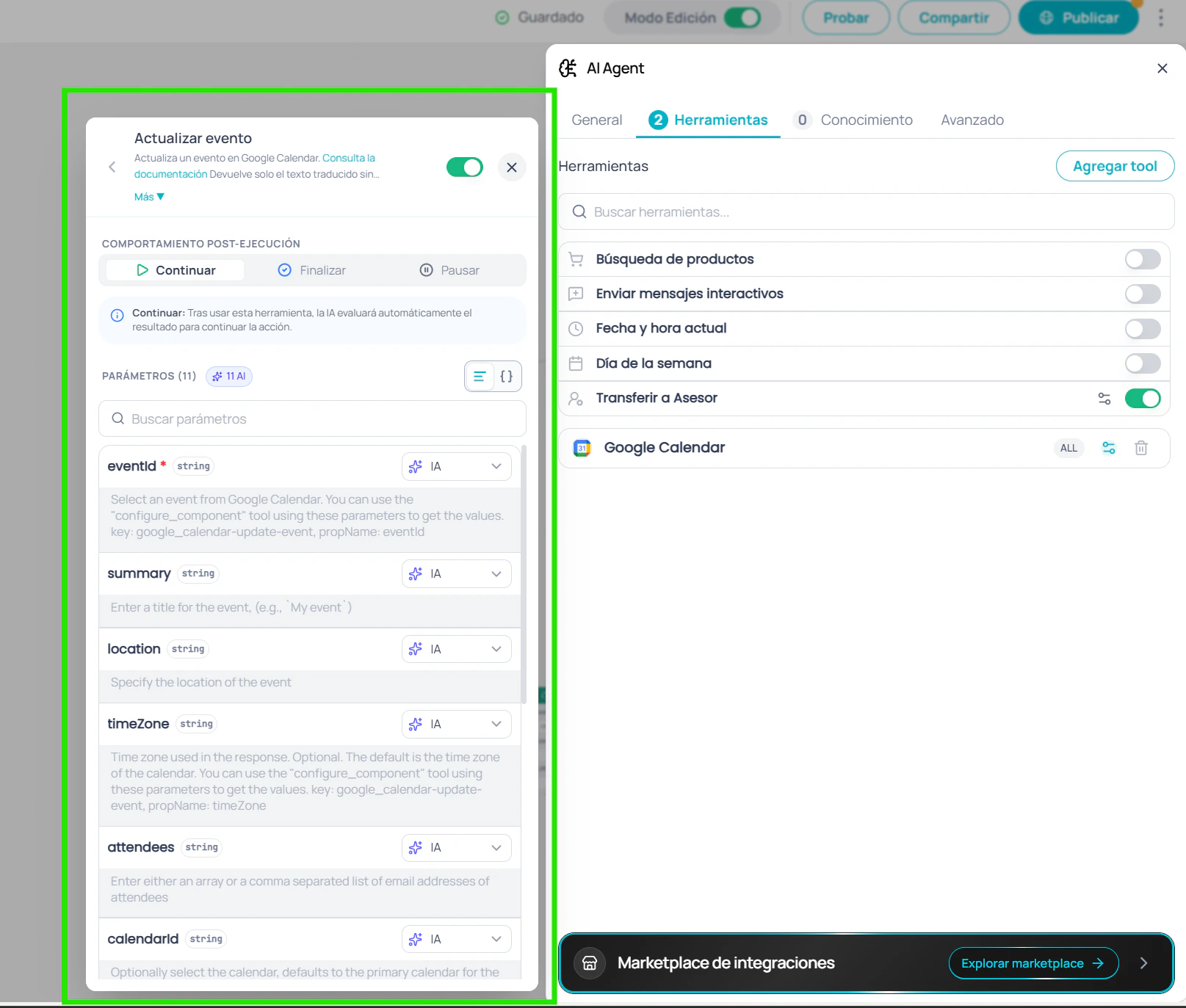

Default parameters

In granular mode, each individual tool allows you to configure default parameters. Click on a tool to open its detail view, where you can:

- View all tool parameters with their type, description, and whether they are required.

- Set default values that the agent will use on every invocation, instead of letting the AI decide.

- Use the variable selector to inject dynamic values from the flow (e.g., {{$context.email}}).

- Toggle between form view and JSON editor to edit all parameters at once.

- Search parameters by name when the tool has many.

| Indicator | Meaning |
|---|---|
| Manual | Has a value set by you; the agent will always use that value |
| Auto | No value set; the agent decides the value on each invocation |
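The precedence implied by the indicators above can be sketched as a simple merge in which manually set values always win over whatever the model proposes. The function names and the sample parameters below are hypothetical; this is not the platform's internal implementation.

```python
def build_tool_arguments(defaults: dict, model_arguments: dict) -> dict:
    """Merge arguments so that manually set defaults always win."""
    merged = dict(model_arguments)  # "Auto" parameters: chosen by the agent.
    merged.update(defaults)         # "Manual" parameters: fixed values override.
    return merged


def indicator(parameter: str, defaults: dict) -> str:
    """Return the indicator that applies to a parameter: Manual or Auto."""
    return "Manual" if parameter in defaults else "Auto"


# Hypothetical tool with two parameters; names and values are illustrative only.
defaults = {"email": "{{$context.email}}"}
model_arguments = {"email": "guess@example.com", "subject": "Order status"}

print(build_tool_arguments(defaults, model_arguments))
print(indicator("email", defaults), indicator("subject", defaults))
```

In this sketch the `email` value proposed by the model is discarded because a default exists, while `subject` is left for the agent to decide.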

External MCP servers

Connections to your own MCP servers via custom URL.

Add headers (optional)

Configure custom headers (name and value) for authentication or other metadata.

Tool actions

Each tool (native, custom, or MCP) can configure an action that runs after it is invoked:

| Action | Behavior |
|---|---|
| None | The agent continues the conversation normally |
| End function | The workflow execution ends after using the tool |
| Pause interaction | The conversation pauses until it is resumed externally |
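One way to reason about these actions is as a transition that decides what happens to the conversation once the tool returns. The sketch below models that idea; the `ToolAction` enum and the state names are hypothetical labels chosen for this example, not identifiers exposed by the platform.

```python
from enum import Enum


class ToolAction(Enum):
    NONE = "None"
    END_FUNCTION = "End function"
    PAUSE_INTERACTION = "Pause interaction"


def state_after_tool(action: ToolAction) -> str:
    """Map a tool's configured action to the conversation state that follows."""
    if action is ToolAction.END_FUNCTION:
        return "ended"    # Workflow execution stops after the tool runs.
    if action is ToolAction.PAUSE_INTERACTION:
        return "paused"   # Waits until the conversation is resumed externally.
    return "running"      # The agent continues the conversation normally.


for action in ToolAction:
    print(action.value, "->", state_after_tool(action))
```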

Context

The Context tab centralizes the configuration of the agent’s input context: how each conversation starts, how many previous messages it remembers, and what knowledge sources it consults to generate its responses.

Initial message

Defines the text the agent receives as the first user turn when the node begins executing. This setting determines what information the agent starts with to generate its first response.

| Option | Behavior | When to use |
|---|---|---|
| Pass the last message | The agent receives the last message in the conversation as the initial input | Most cases: customer service, technical support, general queries |
| No user message | No user turn is sent to the model; the agent responds based only on its system instructions | When there is no relevant user message to pass to the agent (HSM campaigns, automated workflows, post-data collection) |
| Custom | Shows a text field with a variable selector to define a custom value | When you need to combine variables or send specific context to the agent |
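The three options in the table above can be pictured as a small selection function. This is a minimal sketch: the mode identifiers are hypothetical, and it assumes the 100-character limit documented for the Custom field is checked on the text as typed, which may not match how the platform applies it.

```python
from typing import Optional

CUSTOM_LIMIT = 100  # Character limit documented for the Custom field.


def initial_user_turn(mode: str, last_message: str = "", custom: str = "") -> Optional[str]:
    """Return the text sent as the first user turn, or None when no turn is sent."""
    if mode == "pass_last_message":
        return last_message
    if mode == "no_user_message":
        return None  # The agent relies only on its system instructions.
    if mode == "custom":
        if len(custom) > CUSTOM_LIMIT:
            raise ValueError(f"Custom message exceeds {CUSTOM_LIMIT} characters")
        return custom
    raise ValueError(f"Unknown mode: {mode}")


print(initial_user_turn("pass_last_message", last_message="What are the business hours?"))
print(initial_user_turn("no_user_message"))
```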

Use case examples

Pass the last message — Customer service

A user writes “What are the business hours?” and the agent receives it directly as input, generating a response based on its knowledge base.

No user message — When there is no real user query

Use this option when the last available message does not represent a real user query. Common scenarios:

- Post-HSM campaign: the user responded to a WhatsApp template by tapping a button like “Yes, I’m interested”. That payload is not a query — the agent should start from its instructions and the flow’s context.

- Automated workflows: the flow was triggered by a webhook, scheduler, or external API. There is no user message because no user wrote anything.

- Post-data collection: previous Input nodes already collected name, order number, etc. The last message is a data point (e.g., "ORD-12345"), not a question. The agent should respond based on the information already stored in memory.

In this mode, the agent generates its first response based solely on its system instructions. If you need the agent to greet the user, configure it in the agent’s instructions.

Custom — Tailored context with variables

Allows you to build an input message by combining flow variables. For example, to pass context information to the agent:

When selecting Custom, the field supports variable interpolation with the {{$variable}} syntax and has a 100-character limit. Use the variable selector to explore the available variables in your flow.

Remember previous messages

When enabled, the agent includes the last N messages of the conversation as context each time it starts. This maintains conversational coherence when the user resumes a session after an interval.

Upload block

The unified upload block accepts documents and images in the same interaction. You can add files in three ways:

- Drag and drop files directly onto the block

- Click on the block to open the file selector

- Paste a URL in the link field at the bottom of the block (supports the same document and image formats listed below)

| Type | Supported formats | Maximum size |
|---|---|---|
| Documents | .pdf, .xlsx, .md, .txt, .json, .csv | 2 MB per file |
| Images | .jpg, .jpeg, .png | 10 MB per file |

Documents are processed one at a time. If you select or drag multiple documents simultaneously, only the first one will be processed and you will receive a notice to upload the remaining ones individually. Images, on the other hand, are processed in batch.
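If you prepare files with a script before uploading them, the limits above can be checked locally. The sketch below is an assumption-laden pre-check, not the platform's validator: it treats 1 MB as 1024 × 1024 bytes, identifies the file type by extension only, and mirrors the rule that only the first document in a batch is processed.

```python
from pathlib import Path

MB = 1024 * 1024  # Assumption: limits are interpreted as binary megabytes.
LIMITS = {
    "document": ({".pdf", ".xlsx", ".md", ".txt", ".json", ".csv"}, 2 * MB),
    "image": ({".jpg", ".jpeg", ".png"}, 10 * MB),
}


def classify_upload(filename: str, size_bytes: int) -> str:
    """Return "document" or "image", or raise ValueError if the file is rejected."""
    extension = Path(filename).suffix.lower()
    for kind, (extensions, max_size) in LIMITS.items():
        if extension in extensions:
            if size_bytes > max_size:
                raise ValueError(f"{filename} exceeds the {max_size // MB} MB limit")
            return kind
    raise ValueError(f"{filename} has an unsupported format")


def plan_batch(files: list[tuple[str, int]]) -> list[str]:
    """Keep every image but only the first document of a batch."""
    accepted, document_seen = [], False
    for name, size in files:
        kind = classify_upload(name, size)
        if kind == "document":
            if document_seen:
                continue  # Remaining documents must be uploaded individually.
            document_seen = True
        accepted.append(name)
    return accepted


print(plan_batch([("faq.pdf", 500_000), ("prices.csv", 20_000), ("logo.png", 300_000)]))
```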

Documents

When you upload a document, a panel opens where you define its name (maximum 30 characters) and an optional description that helps the agent understand the file’s content. Once uploaded, you can edit its metadata or delete it from the list.

Images

Images are uploaded directly without additional steps and appear as thumbnails below the upload block. You can upload up to 3 images per agent.

- Click a thumbnail to view it in full screen

- Hover over a thumbnail to see the delete button

Datastores

Connect Datum databases so the agent can query structured information in real time:

- Select the datastore to connect

- Configure the allowed operations on the database

We recommend always uploading documents in the Context tab to anchor the agent’s responses to your business’s official documentation. Assign a clear description to each uploaded file so the agent can tell what it contains. The model prioritizes information from your files and datastores over its general knowledge, which reduces the risk of hallucinations.

External context

Allows resuming this node from an external system via the resume API. Useful for workflows that require external asynchronous processing, such as validations, payments, or approvals.

The executionId is available as {{$context.executionId}} within the flow and is valid for 24 hours.

Advanced

The Advanced tab contains additional settings for finer control over agent behavior.

Save response

Allows you to store the agent’s last response in a variable for use in subsequent nodes of the flow.

The variable will be available as {{$context.ai_agent_response}} in subsequent nodes of the flow.

Fallback model

Select an alternative model that will be used automatically if the primary model fails or is unavailable.

Processing options

Independent options to enable additional capabilities for messages the user sends during the conversation:

| Option | Description |
|---|---|
| PDF support | Allows the agent to read and process PDF documents sent by the user during the conversation (text only, not images inside the PDF) |
| Read image URLs | Enables the agent to process images that the user sends as a URL with descriptive text during the conversation |
| Quick Reply Payload support | Allows the agent to interpret the payload of quick replies from interactive messages |

These options apply to files that the end user sends during the conversation. Images and documents you configure as agent context are managed from the Context tab.

Multi-message response

Splits the agent’s response into separate bubbles when it contains paragraph breaks (\n\n), media URLs, or lists. Image, video, audio, or document URLs are sent as independent media bubbles according to the channel, while numbered lists are kept together.

Enabled by default for agents created after April 24, 2026. Earlier agents keep the previous behavior (single bubble) until you manually enable the toggle.
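To get a feel for how a response might be divided, the sketch below splits text on blank lines and lifts media URLs into their own bubbles. It is a simplified approximation: detecting media by file extension and the extension list itself are assumptions, and the platform's real splitting rules (including channel-specific handling) are not reproduced here.

```python
import re

# Assumption: a media URL is recognised by its file extension.
MEDIA_URL = re.compile(r"https?://\S+\.(?:jpg|jpeg|png|mp4|mp3|pdf)", re.IGNORECASE)


def split_into_bubbles(response: str) -> list[str]:
    """Split a response on blank lines and lift media URLs into their own bubbles."""
    bubbles = []
    for paragraph in re.split(r"\n{2,}", response.strip()):
        urls = MEDIA_URL.findall(paragraph)
        text = MEDIA_URL.sub("", paragraph).strip()
        if text:
            bubbles.append(text)  # A numbered list without blank lines stays whole.
        bubbles.extend(urls)      # Each media URL becomes an independent bubble.
    return bubbles


reply = (
    "Here are the steps:\n1. Open the app\n2. Tap Pay\n\n"
    "See the map: https://example.com/map.png\n\n"
    "Thanks!"
)
for bubble in split_into_bubbles(reply):
    print(repr(bubble))
```

In this example the numbered list stays in a single bubble because its items are separated by single line breaks, while the image URL is emitted separately from the sentence that introduced it.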

Security (Guardrails)

Configure the agent’s level of protection against misuse, prompt injection, and out-of-scope requests.

Select security level

Choose the preset that best fits your use case:

| Level | Description |
|---|---|
| Low | Minimum protection, greater response flexibility |
| Medium | Balance between security and flexibility (recommended) |
| High | Strict protection, restricts out-of-scope responses |
| Critical | Maximum protection, ideal for regulated contexts |

Expiration

Configure a time limit for the agent session. If the user does not respond within the configured time, the session expires automatically.

DLP (Data Loss Prevention)

Enable automatic detection and masking of sensitive information in agent conversations.

Select sensitive data types

Choose which types of information should be automatically detected and masked:

| Data type | Default replacement value |
|---|---|
| Credit card number | [CreditCardNumber] |
| Credit card track number | [CreditCardTrackNumber] |
| Email address | [EmailAddress] |
| Financial account number | [FinancialAccountNumber] |
| IP address | [IpAddress] |
| Location | [Location] |
| Geographic coordinates | [LocationCoordinates] |
| Phone number | [PhoneNumber] |
| Date | [Date] |
| Person name | [PersonName] |
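The effect of masking can be illustrated with a small sketch that replaces two of the data types above with their default replacement values. The regular expressions are deliberately simplified assumptions for demonstration; the platform's actual detection is not documented here and likely differs.

```python
import re

# Simplified patterns for two of the data types listed above (assumptions,
# not the platform's detection logic).
PATTERNS = {
    "[EmailAddress]": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "[IpAddress]": re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"),
}


def mask_sensitive(text: str) -> str:
    """Replace each detected value with its default replacement token."""
    for replacement, pattern in PATTERNS.items():
        text = pattern.sub(replacement, text)
    return text


print(mask_sensitive("Write to ana@example.com from 192.168.0.1"))
# Prints: Write to [EmailAddress] from [IpAddress]
```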

Follow-up message

When enabled, allows the agent to send follow-up messages to keep the conversation active if the user does not respond.

Configuration example

A typical configuration flow for a customer service agent:

Configure General

Select GPT 4.1 as the model and write clear instructions defining the agent’s role, tone, and scope.

Add Tools

Enable Transfer to agent to escalate complex conversations, Product search if the agent handles catalog queries, and Send interactive message to show options to the user.

Configure Context

Select Pass the last message as the initial message, upload the FAQ document in PDF format, add reference images if the model supports vision, and connect the product datastore if applicable.